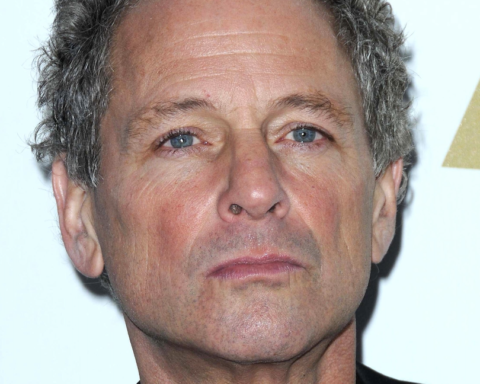

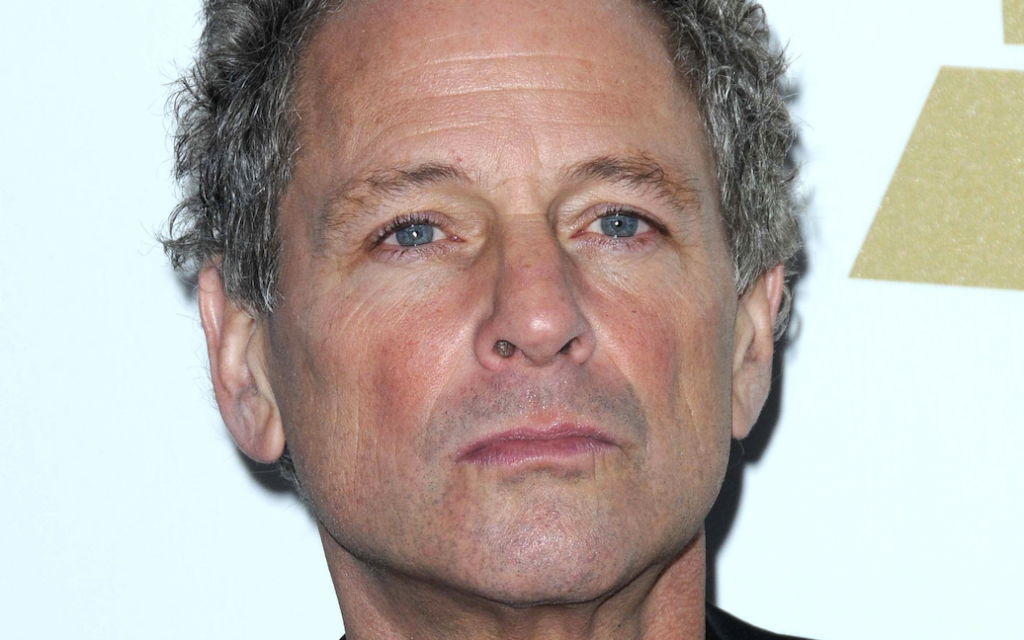

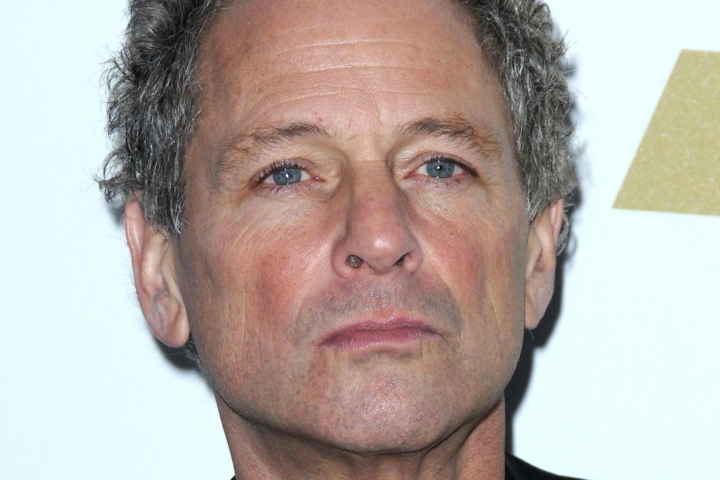

As artificial intelligence becomes capable of generating technically “correct” sound, the role of sound designers is undergoing a quiet but significant transformation. For sound designer Shuo Shen, this shift is not an abstract technological discussion but a reality she has encountered across film, broadcast, gaming, and emerging media productions.

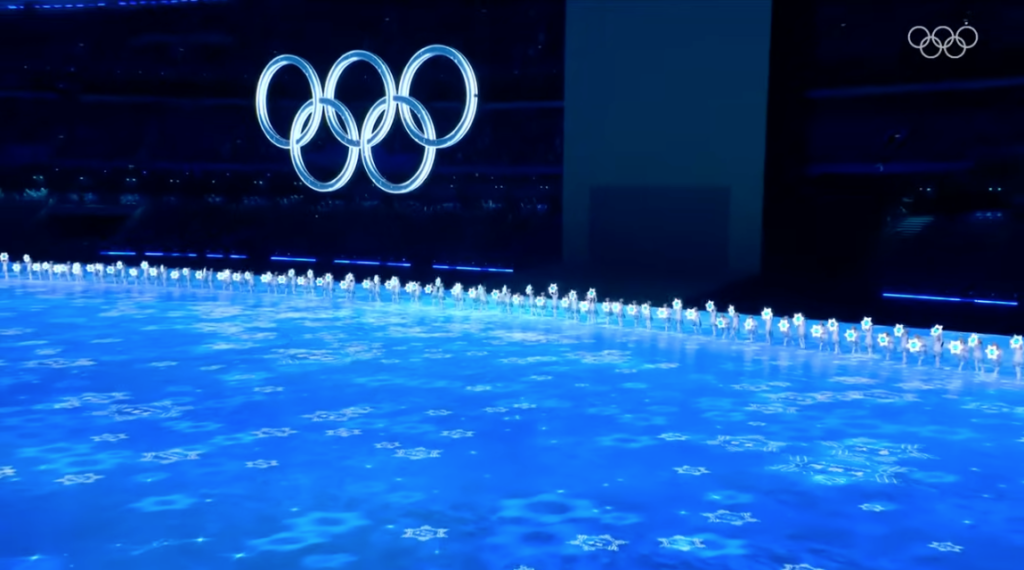

From The Ceremony, the official documentary of the Beijing 2022 Winter Olympics Opening Ceremony, to major broadcast productions such as Chinese Idol: Our Song, and from brand sonic identity systems to experimental AI-generated films, Shen’s work consistently places sound at the center of evolving audiovisual practice. Across these diverse environments, collaborators increasingly rely on experienced sound designers not merely to execute sound but to shape how audiences ultimately experience a work.

Her practice reflects a broader industry question now emerging worldwide — what kind of sound remains necessary when machines can already generate it?

I. The Arrival of the AI Cinema Era: From Generative Systems to Digital Humans

Many in the industry regard 2025–2026 as the beginning of cinema’s generative era.

AI platforms such as Seedance and Hailuo have reached the point where complete audiovisual sequences, including synchronized soundtracks, can be generated directly from text prompts. On a technical level, the resulting sound is often flawless: spatial relationships are coherent, ambiences match their environments, and sound sources are precisely positioned.

Yet as filmmakers began working with these materials, a shared realization quickly emerged — AI-generated sound may be correct, but it is rarely expressive.

It can match images, yet struggles to build emotional tension.

It can produce sonic events, yet fails to understand rhythm and dramatic timing.

It can simulate reality, yet rarely conveys authorial intention.

Sound remains descriptive rather than interpretive — explaining what happens instead of guiding how audiences feel.

This industry-wide challenge became central to Jin Chan Zi, a fully AI-generated film on which Shen recently served as sound designer.

Built on AI-generated imagery, Jin Chan Zi reflects a growing trend: internationally award-winning filmmakers are beginning to experiment with AI production while actively inviting experienced sound designers into the creative process. The shift points to a deeper transformation: as image production becomes automated, directors increasingly rely on sound to restore authorship.

During production, AI tools supplied numerous automatically generated sound options. Shen’s role, however, went beyond technical correction to aesthetic judgment: removing sounds that were “overly correct,” restructuring rhythm, and shaping the audience’s emotional trajectory through sound.

Sound moved from post-production support to narrative foundation.

As Shen sums it up: “AI can generate sound, but it doesn’t know when silence is necessary.”

II. AI Does Not Replace Creators — It Restructures the Industry

Across discussions within the Academy of Motion Picture Arts and Sciences technology forums and the broader professional sound community, a consensus has gradually emerged: artificial intelligence is unlikely to eliminate creators; instead, it is reshaping the hierarchy of creative labor.

Traditionally, the sound industry has operated as a pyramid.

At the base lies execution and large-scale production work; above it sits technical integration; at the top are narrative judgment and aesthetic decision-making — the layer where authorship ultimately resides.

Generative AI is rapidly absorbing the lower tier. Ambience generation, sound matching, preliminary editing, assisted mixing, and standardized design workflows can now be automated with increasing efficiency. For Shen, this shift is not surprising. Having worked at the intersection of sound design, audio technology, and emerging media systems, she has closely followed the development of large-scale generative models and actively experimented with AI-driven audio production pipelines.

Unlike many creative practitioners who encounter AI only as a tool, Shen approaches it with an understanding of how models are trained and where their limitations originate. Her recent experiments across AI film projects and generative audio systems led her to a clear conclusion: current audio foundation models remain significantly less mature than language models.

While large language models demonstrate strong contextual reasoning and semantic coherence, audio models still struggle with higher-order expressive control. They can produce technically plausible sound, yet often fail to sustain emotional continuity, dynamic intention, or rhythmic precision. The result is audio that appears correct on a technical level but rarely enhances the audiovisual experience in a meaningful way.

“AI can generate sound events,” Shen explains, “but it still has difficulty understanding why a moment should feel tense, intimate, or silent.”

From her perspective, generative AI is highly effective at repetitive and standardized tasks, including automated advertising sound production and high-volume content workflows. In taking over this work, it lowers technical barriers and broadens participation in the industry. Cinematic sound creation, however, operates at a different level, where storytelling intention and perceptual psychology define value.

“AI isn’t replacing sound designers,” she notes. “It replaces the parts that don’t require judgment.”

As automation expands, elite sound designers increasingly shift from technical executors to creative decision-makers. AI produces possibilities; humans determine meaning. Paradoxically, the more production becomes automated, the more scarce — and valuable — aesthetic judgment becomes.

III. How Judgment Is Built: From AAA Games to National-Level Image Production

Such judgment did not emerge with the arrival of artificial intelligence. It was formed through years of industrial practice across fundamentally different production environments.

While working on the international AAA game Persona 5: The Phantom X, Shen was not designing sound for an individual title alone but for an expandable sonic universe. Players entering any new environment needed to recognize instantly that they remained within the Persona world — a task requiring long-term system thinking rather than isolated creative decisions. Sound had to function as continuity, identity, and navigation simultaneously.

A different form of responsibility emerged during her work as sound editor on the official documentary of the Beijing 2022 Winter Olympics Opening Ceremony, The Ceremony. Documentary sound operates under historical constraints: events cannot be recreated, and artistic intervention must coexist with authenticity. Here, sound becomes the audience’s primary access point to collective memory, demanding restraint as much as creativity.

The pressure intensified further in live broadcast production. Serving as live sound designer for Dragon Television’s New Year’s Eve Gala, Shen worked within an environment defined by immediacy and unpredictability. Stadium acoustics, performer variability, audience response, broadcast transmission, and stage mechanics evolve continuously in real time. There is no opportunity for revision — only instantaneous judgment.

Through these experiences, Shen developed a perspective shaped not only by artistic practice but also by observation of technological change within the industry. She acknowledges that recent advances in large language models have already begun transforming certain aspects of audio production. AI-generated dialogue and voice synthesis, for instance, increasingly offer viable alternatives to traditional ADR sessions in controlled post-production scenarios.

Yet her experience suggests a clear boundary.

Large-scale live broadcasts, entertainment events, and complex multi-signal productions remain deeply resistant to automation. Each performance carries unique acoustic conditions, shifting emotional pacing, and rapidly changing technical demands. Decisions must respond to human behavior — performers adjusting timing, audiences reacting unpredictably, directors modifying cues moments before transmission.

“In live environments,” Shen observes, “sound design is less about generating audio and more about understanding situations.”

Current AI systems lack the contextual awareness, situational responsiveness, and cross-department coordination required in such settings. Every program demands different priorities: vocal clarity for one artist, spatial energy for another, or controlled crowd response for broadcast storytelling. These moment-to-moment judgments rely on accumulated experience rather than predefined models.

In Shen’s view, the industry remains in a transitional period. Although generative tools continue to advance, a substantial number of traditionally produced film and television projects are still moving through long production cycles, and for complex productions that require nuanced creative judgment, directors still frequently rely on experienced sound designers. At the same time, professional adoption of AI systems demands new forms of expertise: practitioners must learn not only how to operate these tools but how to integrate them meaningfully into established workflows.

Together, these high-stakes environments shaped Shen’s defining capability: making decisive creative and technical choices within complex systems. Not every sound designer develops this competence; it grows out of sustained engagement with productions where artistic intention, technological control, and real-world uncertainty must operate simultaneously.

IV. The Commercial Field: Sound as a Brand Identity System

Even before the AI wave transformed media production, Shen was deeply involved in brand and commercial sound design, developing a distinctive perspective on sonic strategy.

Sound, in her view, is not merely a carrier of content — it can function as a core component of brand identity.

In the Snack Paper campaign for Bestore, rather than using realistic chewing sounds, she designed metallic-textured fracture effects, transforming the sensation of “crispness” into an instantly recognizable auditory signature. Sound evolved from accompaniment into a brand asset.

A collaboration between Honkai: Star Rail and Keep presented an even more complex challenge: sound needed to serve the game’s narrative universe, the fitness platform’s brand tone, and the public personas of the featured artists, all at once.

In an era of algorithmic content saturation, a three-second sonic signature can often be remembered more easily than an image.

These projects reflect a broader transformation: sound designers are shifting from technical specialists to experience architects. Rather than merely adding sound to images, they construct identity, perception, and user engagement through sonic systems — a role made increasingly valuable as AI becomes capable of generating technically competent sound at scale.

V. The Next Decade of Sound: From Narrative to Presence

If AI is transforming how images are produced, sound is increasingly determining whether digital characters truly feel present.

As digital humans, AI-generated performers, and fully synthetic films continue to proliferate, audience perception of authenticity relies less on visual realism and more on sonic experience. Images can be synthesized and motion can be simulated, but presence — the sensation that something truly exists within a space — is established through sound.

Shen’s recent experimental project Immersive by Your Own Music, exhibited by ArtistTalk Magazine, explores sound as an interactive interface rather than a passive medium. By capturing participants’ voices and physiological rhythms, the system generates personalized environmental music in real time, allowing sound to respond dynamically to human states.

Within this framework, sound is no longer merely something to be heard. It becomes a language through which humans communicate with systems — simultaneously sensing, responding, and shaping emotional perception.

A similar exploration appears in Shen’s work on the VR narrative game Taste of Redemption, presented at New York’s Nil Gallery, where she served as sound designer, producer, and audio engineer. Unlike traditional cinema, the project dispenses with a fixed camera perspective and a linear narrative structure. The audience navigates the narrative spatially, and sound becomes the primary mechanism guiding attention, emotion, and orientation within the virtual environment.

In VR, visual information alone cannot sustain immersion. Presence emerges when sonic space reacts convincingly to movement, distance, and psychological pacing. Shen’s approach treats sound not as accompaniment but as environmental architecture — a system that allows virtual characters and spaces to feel inhabited rather than displayed.

For Shen, these experiments point toward a broader shift. The next decade of sound will not be defined by higher resolution, larger channel counts, or technical fidelity alone, but by the emergence of sound as an interactive language — one capable of mediating relationships between humans, machines, and digital identities.

As AI increasingly mass-produces technically acceptable audio, scarcity shifts elsewhere. Value now lies with creators capable of establishing presence, authorship, and emotional connection through listening itself.

VI. A Sound Designer at an Industry Turning Point

Viewed across her career, Shen’s work reveals a consistent pattern: she has repeatedly worked at moments when the structure of the sound industry itself was evolving. Rather than remaining confined to a single medium, her practice has moved alongside technological transition, from large-scale broadcast environments to AAA games, experimental spatial media, and emerging AI cinema.

As artificial intelligence assumes an increasing share of technically “correct” production tasks, the role of leading sound designers shifts toward interpretation, authorship, and decision-making under uncertainty. Shen’s trajectory illustrates how sustained experience across complex production systems enables creators to adapt without relinquishing artistic intention.

In this emerging landscape, technical access becomes widespread, but judgment remains rare. The future of sound design may therefore depend less on tools than on practitioners capable of defining meaning within rapidly changing media environments.

AI may reshape production infrastructure, yet authorship at its highest level continues to depend on human perception.

In the era of generative cinema, the rarest resource is no longer technology.

It is judgment.