Meta is installing tracking software on the computers of its US-based employees that will capture their mouse movements, clicks, keystrokes and periodic screenshots of their screens, the company told staff in internal memos seen by Reuters on April 21, 2026.

The data will be fed into Meta’s artificial intelligence models as training material.

The stated goal is to build AI agents capable of performing computer-based work tasks autonomously: the same tasks currently performed by the employees generating the data.

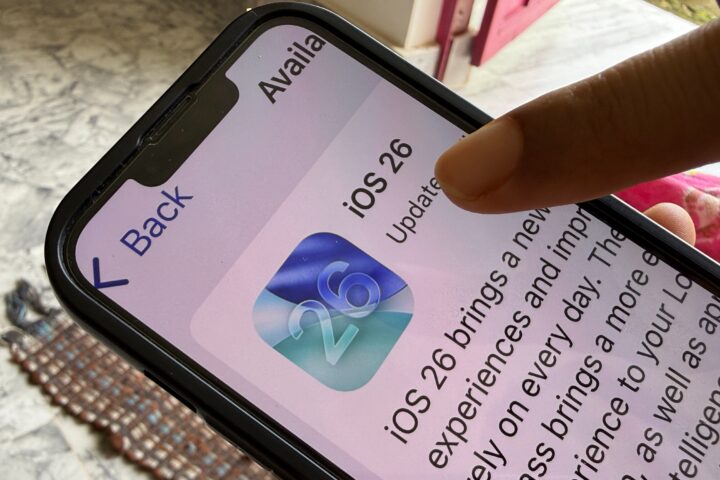

The tool is called the Model Capability Initiative, or MCI. It will run on a specific list of work-related apps and websites.

A staff AI research scientist posted the announcement in an internal channel for Meta SuperIntelligence Labs, the company’s model-building division.

The memo told employees the initiative was aimed at improving AI models in areas where they currently struggle, such as choosing from dropdown menus and using keyboard shortcuts.

“This is where all Meta employees can help our models get better,” the memo said, “simply by doing their daily work.”

Meta spokesperson Andy Stone confirmed the data would not be used for performance evaluations and said safeguards were in place to protect “sensitive content,” without specifying what would be excluded from collection.

What Will The Data Be Used For?

The problem Meta is trying to solve is a specific and increasingly pressing one across the AI industry.

Building an AI agent that can autonomously operate a computer (navigating apps, clicking through menus, filling in forms, switching between programs) requires training data that shows exactly how humans do those things.

You cannot generate that data synthetically in a way that captures the full texture of real computer use. You need actual recordings of humans actually working.

Meta’s employees are a captive and abundant source. By running MCI in the background of normal workdays, Meta harvests continuous demonstrations of human-computer interaction across thousands of workers doing real professional tasks.

Each time an employee selects from a dropdown, triggers a keyboard shortcut, or navigates between applications, the software logs it.

The occasional screen snapshots provide context for what the mouse and keyboard activity was accomplishing.

The vision for what that data enables was spelled out by Meta’s CTO Andrew Bosworth in a separate memo sent to employees the day before the MCI announcement.

Bosworth told staff that the company would be stepping up its internal data collection as part of its broader AI for Work initiative.

“The vision we are building towards is one where our agents primarily do the work,” Bosworth wrote, “and our role is to direct, review and help them improve.”

That sentence is the clearest description yet of what Meta intends to build: AI systems that do the jobs, with humans reduced to oversight and correction roles.

The Legal Wall Around Non-US Employees

The MCI program applies specifically to US-based employees, and that geographic boundary is not incidental.

European law makes what Meta is doing in the US significantly harder, and in some cases impossible, to replicate elsewhere.

In Italy, using electronic monitoring to track employee productivity is explicitly illegal.

In Germany, courts have established that keystroke logging by employers is permissible only in exceptional circumstances, specifically when there is suspicion of a serious criminal offense.

More broadly, the European Union’s General Data Protection Regulation would likely classify this kind of systematic behavioral surveillance of employees as a violation, according to Valerio De Stefano, a law professor at York University in Toronto who studies technology and comparative labor law.

De Stefano also raised a concern that goes beyond legal compliance. When employees are aware they are being monitored in this way, he said, it shifts the balance of workplace power toward the employer, not just in the data relationship but in the practical dynamics of how people work and what they feel comfortable doing on their own computers during the workday.

Meta’s solution, for now, is to limit the program to the United States, where federal law does not prohibit this kind of employee monitoring and where most of the legal constraints are either minimal or practically unenforced in the tech sector.

The US workforce at Meta becomes the training set. The rest of the world watches from outside the data collection boundary.

The Bigger Race For Training Data

Meta is not alone in urgently seeking new sources of training data. The most accessible public text and image data on the internet has already been harvested by the major AI labs.

The next frontier is behavioral and professional data, the kind that shows how humans actually perform knowledge work, not just what they have written about it.

In January 2026, OpenAI was reported to be asking third-party contractors, through a training data firm called Handshake AI, to upload samples of real work products from their previous jobs, such as actual PowerPoint presentations, spreadsheets and similar documents. The contractors were instructed to remove confidential material before submitting them.

The approach raised its own concerns about what “scrubbed” actually meant in practice and who was responsible for ensuring nothing sensitive made it into the training pipeline.

Meta’s approach is more systematic and more direct: instead of asking workers to submit old files, it is installing software that continuously records the work as it happens.

The scale is different, the intimacy is different, and the degree of employee agency is different.

With the OpenAI approach, contractors chose to participate and chose which documents to submit. With MCI, Meta employees generate training data by simply opening their laptops.

Meta acquired a 49 percent stake in Scale AI, a data labeling company, last year for more than $14 billion.

Scale’s former CEO Alexandr Wang now leads Meta SuperIntelligence Labs, the division that oversees the MCI program. The division was founded in June 2025 with approximately 3,000 staff, some of them hired away aggressively from OpenAI, Anthropic and Google DeepMind.

Wang’s background is in exactly the kind of data infrastructure that feeds AI models. His presence at the head of Meta SuperIntelligence Labs is the organizational expression of how seriously Meta is treating the data supply problem.

What Employees Are Actually Generating

The memo’s framing, that employees can help “simply by doing their daily work,” is technically accurate and strategically useful. It asks nothing additional from workers and positions their contribution as passive and incidental.

What is being generated is not incidental. It is a granular record of how a human professional navigates a computer across an eight-hour workday: which applications they use, in what sequence, how they move between tasks, what keyboard patterns they have developed, how they handle routine versus novel decisions.

Across thousands of employees doing different kinds of work, that dataset begins to look like a comprehensive curriculum for training an AI to replicate white-collar computer work.

The memo acknowledged that the models currently struggle with basic interface tasks such as choosing from dropdown menus and using keyboard shortcuts.

These are not complex behaviors. They are the lowest-level mechanical operations of computer use.

The fact that AI agents still struggle with them illustrates both how hard the problem is and how much data is required to solve it.

Watching humans do it thousands of times, in context, is apparently the most efficient path.

Meta has framed the program as a training initiative with employee safeguards.

Critics and legal scholars are framing it as surveillance of workers for the purpose of building systems designed to make those workers unnecessary.
