Cerebras Systems began trading on the Nasdaq Global Select Market on Thursday, May 14, 2026, under the ticker CBRS. It was the most anticipated AI chip IPO of the year and one of the largest technology IPOs in recent memory.

The company priced its shares at $185 the night before, raising at least $5.55 billion from the sale of 30 million Class A shares.

By the time trading opened Thursday morning, shares were being indicated at approximately $350, nearly double the IPO price. That gave the company an implied valuation approaching $80 billion before it had recorded a single trade as a public company.
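The headline numbers above can be checked with a few lines of arithmetic. The sketch below uses only figures quoted in this article; it ignores any overallotment shares, which the article does not quantify, so the proceeds shown are the "at least" floor.

```python
# Figures quoted in the article
ipo_price = 185.0           # dollars per share
shares_sold = 30_000_000    # Class A shares sold in the offering
indicated_open = 350.0      # approximate opening indication, dollars

gross_proceeds = ipo_price * shares_sold
premium_over_ipo = indicated_open / ipo_price - 1

print(f"Gross proceeds: ${gross_proceeds / 1e9:.2f} billion")   # $5.55 billion
print(f"Premium over IPO price: {premium_over_ipo:.0%}")        # 89%
```

An 89 percent premium is what "nearly double" means in practice: the indication sits about $165 above the offering price.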

Cerebras CEO Andrew Feldman described his company’s core technology in terms that explain why AI investors have been waiting for this IPO.

“We built a chip the size of a dinner plate,” he told Yahoo Finance Thursday morning. “It’s 58 times larger than any chip previously built. In AI, bigger chips are faster.”

That is the product pitch. The business pitch is that Cerebras has a $20 billion multi-year contract with OpenAI, generated $510 million in revenue and $238 million in net profit in 2025, and is one of the few AI chip companies in the world that is both growing rapidly and profitable.

The IPO was 20 times oversubscribed. Arm Holdings and SoftBank approached the company with a preliminary acquisition offer before the offering. Cerebras proceeded with the IPO anyway.

What Is Cerebras?

The AI chip market is dominated by Nvidia in a way that the word dominant does not fully convey.

Nvidia’s CUDA software ecosystem, the programming framework that allows developers to write AI code for Nvidia GPUs, is so deeply embedded in the tools, workflows and training pipelines of the AI industry that switching away from it is a multi-year project that most organizations are not willing to undertake.

Nvidia’s market share in AI data center accelerators exceeds 90 percent. The question “who is the Nvidia of AI chips” has one answer.

Cerebras is not trying to be the Nvidia of AI chips. It is trying to own the specific part of AI computing where Nvidia’s GPU architecture has inherent limitations: inference speed and latency at the extreme end of performance requirements.

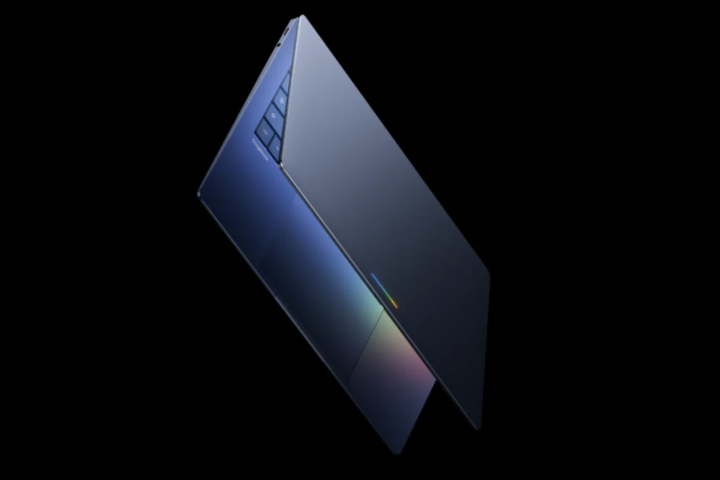

The Wafer-Scale Engine 3, Cerebras’s flagship product, is physically constructed differently from any chip Nvidia or any other GPU manufacturer produces.

Where conventional chips are the size of a fingernail, the WSE-3 encompasses an entire silicon wafer.

The result is a chip with 58 times the area of a leading GPU. More area means more compute units connected at shorter distances with more memory bandwidth, which translates directly into inference speed.

Cerebras claims the WSE-3 can run AI inference up to 15 times faster than leading GPU-based solutions on leading open-source models, while using a fraction of the power per unit of compute.

The specific use case where that matters most is real-time AI inference at scale, situations where the speed of the AI’s response is itself valuable.

OpenAI’s investment in Cerebras infrastructure, 750 megawatts of ultra-low-latency AI compute with capacity coming online in multiple tranches through 2028, reflects a bet that some portion of its AI workloads is better served by Cerebras’s approach than by Nvidia’s GPU farms.

The IPO That Almost Did Not Happen

Cerebras first filed to go public in 2024. The filing attracted national security scrutiny because of the company’s Abu Dhabi-based customer G42, which at the time represented 87 percent of Cerebras’s first-half 2024 revenue.

A federal review of the G42 investment relationship caused the company to withdraw the offering. The IPO became a story about geopolitical risk and customer concentration rather than about the technology.

The 2026 version is a different story. The center of gravity shifted from Abu Dhabi to Silicon Valley.

OpenAI and Amazon Web Services replaced G42 as the defining customer relationships.

The May 4, 2026 S-1 amendment put a $20 billion OpenAI deal at the front of the offering document.

Cerebras was no longer primarily a chip company with a Middle Eastern customer. It was an AI infrastructure company with OpenAI’s name on the contract.

The response to the re-filing was immediate and substantial. The company initially proposed 28 million shares at $115 to $125. By May 11, it had upsized to 30 million shares at $150 to $160.

The final price of $185, above the top end of the second range, was set on May 13.

The 20-times oversubscription that drove two successive price increases, from the initial range to the upsized range to the final price, over the span of roughly one week reflects genuine institutional demand rather than retail enthusiasm.
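The size of the repricing can be quantified from the price points given above. This is a minimal sketch that assumes the midpoint is a fair summary of each marketed range; the article gives only the endpoints.

```python
# Price points from the article: two marketed ranges, then the final price
steps = [
    ("initial range midpoint", (115 + 125) / 2),
    ("upsized range midpoint", (150 + 160) / 2),
    ("final price", 185.0),
]

# Percentage change between each consecutive pair of price points
for (prev_label, prev_price), (label, price) in zip(steps, steps[1:]):
    change = price / prev_price - 1
    print(f"{prev_label} -> {label}: {change:+.1%}")

total_change = steps[-1][1] / steps[0][1] - 1
print(f"initial midpoint -> final price: {total_change:+.1%}")
```

On these assumptions the final price lands roughly 54 percent above the midpoint of the first range, before any first-day trading.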

Before the offering could close, Arm Holdings and SoftBank Group made a preliminary approach about acquiring Cerebras. The company’s response was to proceed with the IPO.

That decision, choosing the public market and its ongoing obligations over a private acquisition, means Cerebras’s management team is either right about how large the company can become or very patient about realizing its returns.

The Financial Picture Behind The Hype

What distinguishes the Cerebras IPO from most high-profile technology listings is that the company is profitable.

In 2025, Cerebras generated $510 million in revenue, up 76 percent year-over-year, and $238 million in net income, a 47 percent net margin.

For an AI chip company with the growth profile and market positioning of a startup, those numbers are unusual.
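The margin and growth figures can be reproduced directly, and they also imply a prior-year revenue base. The 2024 number below is not stated in this article; it is an estimate backed out of the 76 percent growth rate and should be read as such.

```python
revenue_2025 = 510e6        # dollars, from the article
net_income_2025 = 238e6     # dollars, from the article
yoy_growth = 0.76           # 76 percent year-over-year

net_margin = net_income_2025 / revenue_2025
# Derived estimate, not a reported figure: back out the prior-year base
implied_revenue_2024 = revenue_2025 / (1 + yoy_growth)

print(f"Net margin: {net_margin:.1%}")
print(f"Implied 2024 revenue: ${implied_revenue_2024 / 1e6:.0f} million")
```

The margin works out to about 46.7 percent, which rounds to the 47 percent cited, and the implied 2024 base is roughly $290 million.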

The bear case, and there is a meaningful bear case, centers on concentration. OpenAI represents a substantial portion of Cerebras’s projected future revenue under the terms of the Master Relationship Agreement.

Mohamed bin Zayed University of AI represented 62 percent of 2025 revenue. G42 still represented 24 percent of 2025 revenue despite the diversification effort.

A company where three customers account for essentially all of its revenue is a company whose financial profile is highly sensitive to the decisions of those three customers.
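To make the concentration concrete, the sketch below converts the quoted 2025 revenue shares into dollar amounts and models a simple what-if. The "all others" bucket is simply the remainder after the two named shares, and `revenue_after_cut` is a hypothetical helper introduced here for illustration, not a model from the filing.

```python
revenue_2025 = 510e6  # dollars, from the article

# 2025 revenue shares quoted in the article
shares = {"MBZUAI": 0.62, "G42": 0.24}
# Remainder: every other customer combined
shares["all others"] = 1 - sum(shares.values())

for name, share in shares.items():
    print(f"{name:<10} {share:>4.0%}  ${share * revenue_2025 / 1e6:,.1f}M")

def revenue_after_cut(customer: str, cut: float) -> float:
    """Hypothetical scenario: one customer reduces spending by `cut` (0 to 1)."""
    return revenue_2025 * (1 - shares[customer] * cut)
```

For instance, `revenue_after_cut("MBZUAI", 0.5)` comes to about $351.9 million, a 31 percent drop in total revenue from a single customer halving its spending.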

The bull case is that the OpenAI relationship is not merely a customer contract. It is an architectural partnership: Cerebras and OpenAI have agreed to co-design future AI models for future Cerebras hardware.

If that co-design relationship produces AI systems that run most efficiently on Cerebras infrastructure, the revenue concentration that is today a risk becomes tomorrow’s structural advantage.

At an opening indication of approximately $350 per share, nearly 100 times Cerebras’s trailing revenue, the market is pricing the bull case rather than the bear case.

That multiple asks investors to believe that the $510 million in 2025 revenue is the beginning of a trajectory rather than the ceiling of a concentrated business.
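A price-to-sales multiple depends on which share count sits behind the market value, and this article does not specify one. The sketch below works backward from both quoted figures, the "nearly 100 times" multiple and the valuation "approaching $80 billion", to show the share counts each would imply at a $350 price. One possible explanation for the gap, offered here only as an assumption, is a basic versus fully diluted share count.

```python
trailing_revenue = 510e6     # 2025 revenue, from the article
indicated_price = 350.0      # approximate opening indication, dollars
quoted_multiple = 100        # "nearly 100 times" trailing revenue
quoted_valuation = 80e9      # "approaching $80 billion"

def price_to_sales(market_value: float, revenue: float) -> float:
    """Market value divided by trailing revenue."""
    return market_value / revenue

# Market value implied by the quoted multiple, and multiple implied by the quoted valuation
value_at_quoted_multiple = quoted_multiple * trailing_revenue
multiple_at_quoted_valuation = price_to_sales(quoted_valuation, trailing_revenue)

# Share counts each figure would imply at the indicated price (derived, not reported)
shares_at_multiple = value_at_quoted_multiple / indicated_price
shares_at_valuation = quoted_valuation / indicated_price

print(f"100x revenue -> ${value_at_quoted_multiple / 1e9:.0f}B, {shares_at_multiple / 1e6:.0f}M shares")
print(f"$80B valuation -> {multiple_at_quoted_valuation:.0f}x revenue, {shares_at_valuation / 1e6:.0f}M shares")
```

Either way, the multiple is far above what trailing revenue alone would support, which is the point of the paragraph above.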

The Context Of The Biggest IPO Of 2026

Cerebras’s listing is the largest IPO of 2026 so far, surpassing Blackstone Digital Infrastructure Trust and Fervo Energy, which were also among the year’s notable offerings.

The $5.55 billion raised at the IPO price, together with the substantially higher market capitalization implied by the opening trading indication, places it among the significant technology public market debuts of recent years.

It is also the most direct public test of whether the AI semiconductor market has room for a company positioned explicitly as an Nvidia alternative for specific workloads.

The companies that need the fastest possible AI inference, and that can structure their infrastructure around a non-CUDA architecture, are the customers Cerebras is built for.

OpenAI’s willingness to commit $20 billion to that infrastructure is the strongest available external validation that those customers exist and that Cerebras can serve them.

Whether the company is worth what the opening trading indication says it is worth depends on three things: whether that $20 billion OpenAI commitment converts to actual recognized revenue over the next several years, whether Cerebras successfully diversifies its customer base beyond its current concentration, and whether the WSE-3’s technical advantages over GPU-based inference persist as Nvidia and AMD continue to develop their own architectures.

The dinner-plate chip is trading on Nasdaq. The market’s initial answer to what it is worth is nearly double what the company asked.